Renegade 2026 Keynote

The full keynote sessions are now available.

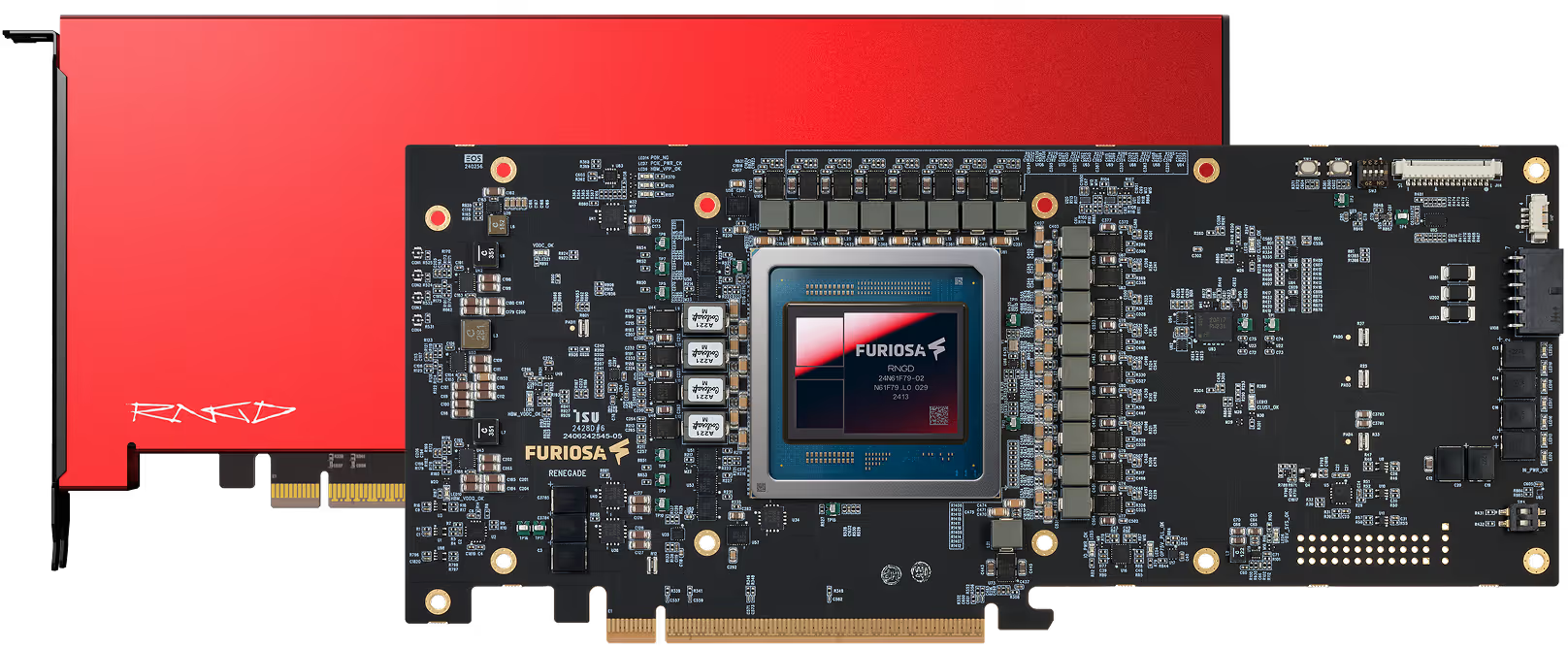

3 KW INFERENCE APPLIANCE FOR AGENTIC SYSTEMS

RNGD enables 4x more inference capacity

| Metric | RNGD | Comparison system |
| --- | --- | --- |
| Max. servers per rack | 5x | 2x |
| Server power consumption | 3 kW | 7.5 kW |
| Tokens/s per rack | 26,400 | 6,600 |
| Max. users per rack | 880 | 220 |
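The arithmetic behind the 4x headline can be checked directly from the figures above. The sketch below is only a back-of-the-envelope check, not a benchmark. It assumes that "5x" and "2x" mean 5 and 2 servers per rack, and the label `comparison` is a placeholder because the page does not name the other system. The helper `derive` and the dictionary keys are hypothetical names chosen for illustration.

```python
# Figures as quoted on the page. ASSUMPTION: "5x" / "2x" are read as
# 5 and 2 servers per rack; the comparison system is not named in the source.
RACKS = {
    "RNGD": {"servers": 5, "server_kw": 3.0, "tokens_per_s": 26_400, "users": 880},
    "comparison": {"servers": 2, "server_kw": 7.5, "tokens_per_s": 6_600, "users": 220},
}


def derive(rack):
    """Derive per-rack power and normalized throughput from the quoted figures."""
    rack_kw = rack["servers"] * rack["server_kw"]
    return {
        "rack_kw": rack_kw,
        "tokens_per_s_per_server": rack["tokens_per_s"] / rack["servers"],
        "tokens_per_s_per_kw": rack["tokens_per_s"] / rack_kw,
        "tokens_per_s_per_user": rack["tokens_per_s"] / rack["users"],
    }


rngd = derive(RACKS["RNGD"])
other = derive(RACKS["comparison"])

# Ratio of rack-level throughput, which is where the "4x" figure comes from.
throughput_ratio = RACKS["RNGD"]["tokens_per_s"] / RACKS["comparison"]["tokens_per_s"]

for name, metrics in (("RNGD", rngd), ("comparison", other)):
    print(name, metrics)
print("throughput ratio:", throughput_ratio)
```

Under that reading, both racks draw the same total server power, so the 4x difference in tokens/s per rack is also a 4x difference in tokens/s per kW, and the throughput available to each user is the same in both columns.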

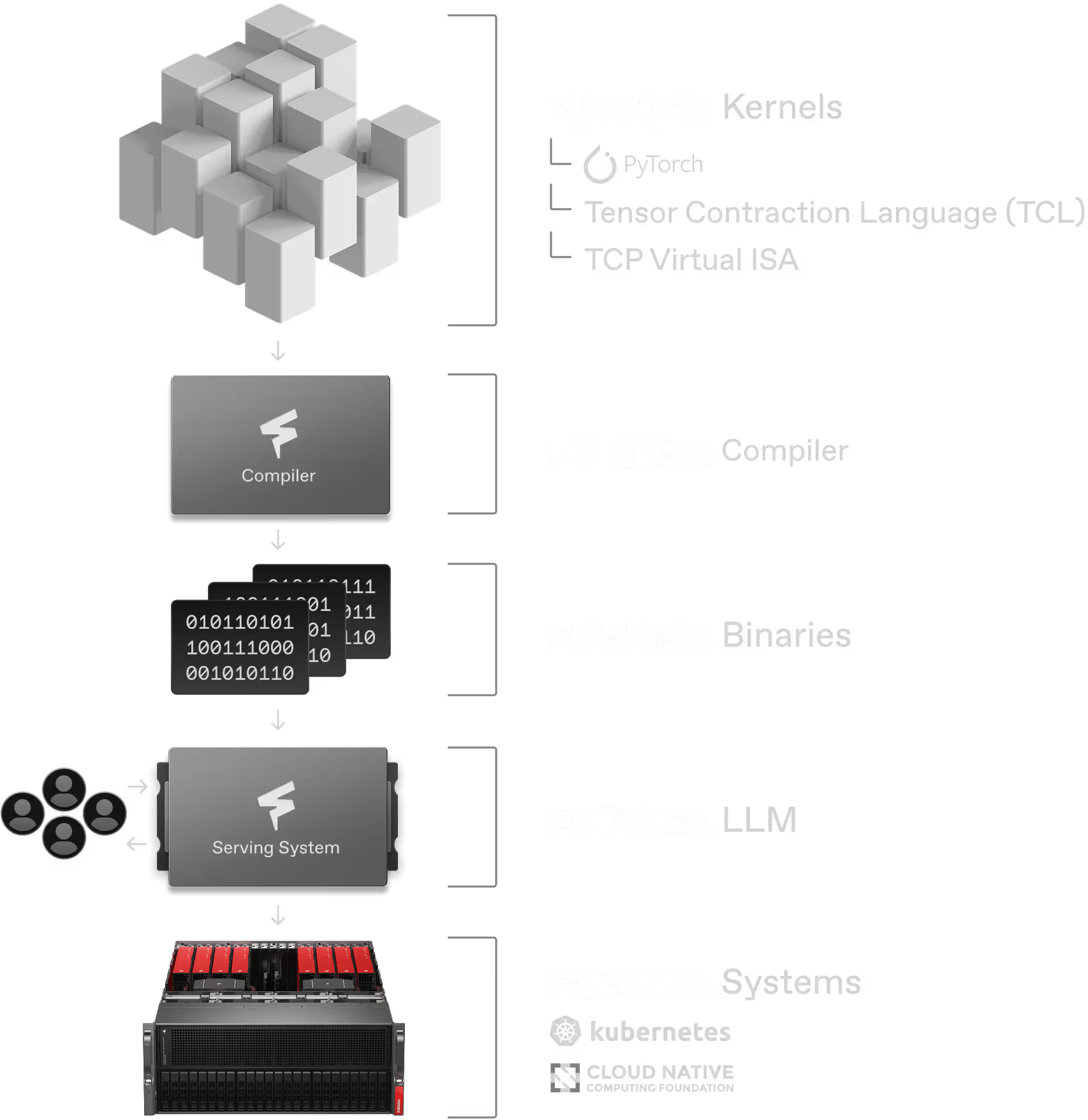

Tensor contraction, not matmul

The fundamental computation of modern deep learning is tensor contraction, a higher-dimensional generalization of matrix multiplication. However, most commercial deep learning accelerators today use fixed-size matmul instructions as their primitives.

RNGD breaks away from that, unlocking powerful performance and efficiency.
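To make the relationship between the two operations concrete, here is a minimal pure-Python sketch. It illustrates the mathematics only and says nothing about how RNGD or its software stack is implemented. The function names `contract`, `matmul`, and `contract_via_matmul` are hypothetical. The example contracts a 3-D tensor with a matrix over a shared index, then shows the same result obtained by flattening the tensor into a 2-D matrix so that an ordinary matmul can be used.

```python
def contract(a, b):
    """Tensor contraction: c[i][j][l] = sum over k of a[i][j][k] * b[k][l]."""
    n_i, n_j, n_k, n_l = len(a), len(a[0]), len(b), len(b[0])
    return [
        [
            [sum(a[i][j][k] * b[k][l] for k in range(n_k)) for l in range(n_l)]
            for j in range(n_j)
        ]
        for i in range(n_i)
    ]


def matmul(a, b):
    """Ordinary 2-D matrix multiply: c[r][l] = sum over k of a[r][k] * b[k][l]."""
    n_k, n_l = len(b), len(b[0])
    return [[sum(row[k] * b[k][l] for k in range(n_k)) for l in range(n_l)] for row in a]


def contract_via_matmul(a, b):
    """Same contraction, expressed by reshaping around a 2-D matmul."""
    n_j = len(a[0])
    flat = [row for slab in a for row in slab]  # merge axes (i, j) into one row axis
    out = matmul(flat, b)                       # shape (i * j, l)
    return [out[i * n_j:(i + 1) * n_j] for i in range(len(a))]  # split rows back into (i, j)


a = [[[1, 2], [3, 4]], [[5, 6], [7, 8]]]  # shape (2, 2, 2)
b = [[1, 0, 2], [0, 1, 3]]                # shape (2, 3)

direct = contract(a, b)
reshaped = contract_via_matmul(a, b)
print(direct)
print(direct == reshaped)
```

The reshaping in `contract_via_matmul` is the extra step a matmul-only primitive requires: the tensor has to be rearranged to fit a 2-D operation and rearranged back afterwards, whereas `contract` works on the original index structure.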

INFERENCE WITHOUT CONSTRAINTS

Performance

Efficiency

Programmability

SOFTWARE FOR LLM DEPLOYMENT

Start testing with Furiosa Access

Blog

FuriosaAI partners with Broadcom to build next-generation inference platform for the Agentic Era

Experience RENEGADE Summit 2026
